How Can Companies Without an IT Department Use Web Scraping?

Companies without in-house IT can outsource the entire data pipeline: scraper development, hosting, maintenance, and delivery. We act as a full technical partner and handle the infrastructure, so your team can focus on insights rather than tooling.

Beyond data collection, we build data-driven tools on top of our own pipelines or external data providers, and we handle analytics end-to-end, from reporting to trend detection.

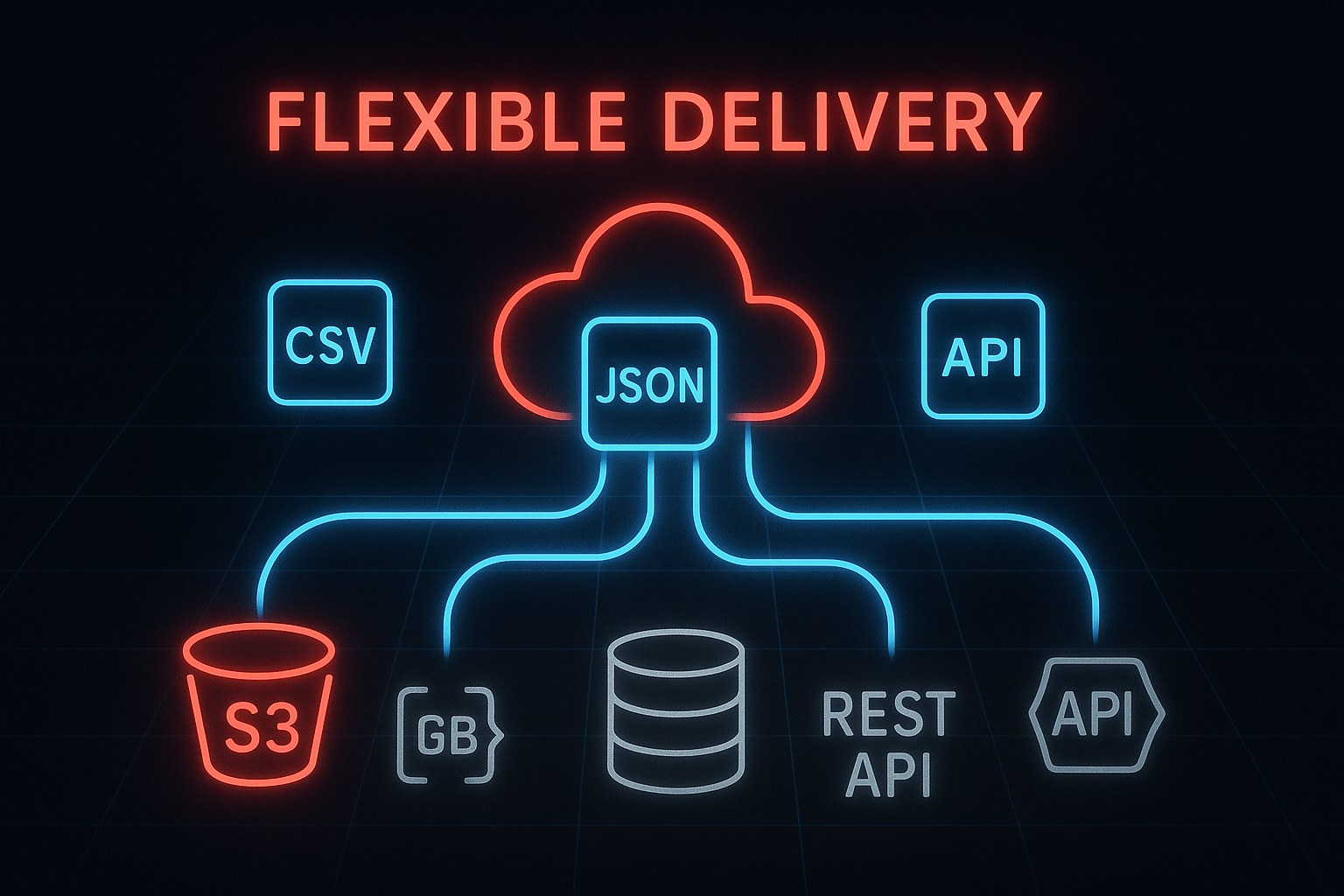

Turnkey delivery

Data delivered as JSON, CSV, or Parquet files, or pushed to you via webhook, ready to load directly into your data warehouse or BI tools.

Custom tools

We build internal dashboards, secure portals, and client-facing data products tailored to your operational needs.

Reports & analytics

Transform raw scraped data into actionable KPI reports, market overviews, and comprehensive competitive insights.

Trends & forecasting

Use historical data for signal detection, time-series analysis, and anomaly alerts that help anticipate market shifts.
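
Anomaly alerts can be built in many ways. As a minimal sketch, assume a daily price series and a simple rolling z-score rule: a point is flagged when it sits more than a set number of standard deviations away from the mean of the preceding days. The function name `flag_anomalies`, the 7-day window, and the threshold of 3 are illustrative assumptions, not a description of our production models.

```python
from statistics import mean, stdev


def flag_anomalies(prices: list[float], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return the indices of points that deviate by more than `threshold`
    standard deviations from the mean of the preceding `window` points.
    Hypothetical helper for illustration only."""
    if window < 2:
        raise ValueError("window must be at least 2 to estimate a standard deviation")
    anomalies = []
    for i in range(window, len(prices)):
        history = prices[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change at all as anomalous.
            if prices[i] != mu:
                anomalies.append(i)
        elif abs(prices[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


daily_prices = [100, 101, 99, 100, 102, 101, 100, 100, 135, 101]
print(flag_anomalies(daily_prices))  # → [8]
```

A rolling z-score is only one option; strongly seasonal series usually call for seasonality-aware methods instead.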

What Can a Custom Web Scraping Service Build for Your Business?

A custom web scraping service can build automated data extractors for any website, including JavaScript-heavy platforms, which we handle with headless browsers driven by Playwright and Puppeteer. We bypass anti-bot protection systems such as Cloudflare, DataDome, and PerimeterX, then deliver structured data in JSON, CSV, or Parquet format within 24–72 hours of project kickoff.

- Automated price & stock monitors with alerts and diffs
- Review & sentiment pipelines for e-commerce and social
- Job-market trackers (titles, salaries, hiring velocity)
- Real-time APIs & webhooks with authentication and SLAs
- Custom dashboards: CSV/JSON/Parquet → BI-ready
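
At its core, a price & stock monitor compares consecutive snapshots of the same catalogue. The sketch below assumes each scrape is stored as a dictionary keyed by a hypothetical SKU identifier with `price` and `in_stock` fields; the snapshot shape and the `diff_snapshots` helper are illustrative assumptions, and routing the resulting changes to alerts (email, chat, webhook) is left out.

```python
from typing import Any

# Hypothetical snapshot shape: {sku: {"price": float, "in_stock": bool}}
Snapshot = dict[str, dict[str, Any]]


def diff_snapshots(previous: Snapshot, current: Snapshot) -> list[tuple]:
    """Return (sku, change_type, old_value, new_value) tuples describing
    what changed between two scrapes of the same catalogue."""
    changes = []
    for sku in sorted(previous.keys() | current.keys()):
        old, new = previous.get(sku), current.get(sku)
        if old is None:
            changes.append((sku, "added", None, new))
        elif new is None:
            changes.append((sku, "removed", old, None))
        else:
            # Only the monitored fields are compared; any other fields are ignored.
            for field in ("price", "in_stock"):
                if old.get(field) != new.get(field):
                    changes.append((sku, field, old.get(field), new.get(field)))
    return changes


yesterday = {"A1": {"price": 19.99, "in_stock": True}, "B2": {"price": 5.0, "in_stock": True}}
today = {"A1": {"price": 17.99, "in_stock": True}, "B2": {"price": 5.0, "in_stock": False}}
for change in diff_snapshots(yesterday, today):
    print(change)
# ('A1', 'price', 19.99, 17.99)
# ('B2', 'in_stock', True, False)
```

Returning structured tuples rather than formatted messages keeps the diff reusable: the same output can drive an alert, a changelog table, or a dashboard.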

Delivery

CSV, JSON, Parquet, DB export, API, webhooks

Compliance

Public data only, GDPR-first, optional EU hosting

Quality

Schema validation, deduplication, freshness checks, and audit logs

Support

Proof of concept (POC) in 24–72 hours, ongoing maintenance, and SLAs
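
The Quality and Delivery items above can be illustrated with a small pre-delivery step. This is a minimal sketch, assuming records arrive as Python dictionaries and that a hypothetical four-field schema applies; it covers only type-based schema validation, first-seen deduplication by SKU, and CSV / JSON Lines export. Freshness checks, audit logs, and Parquet output (which would need a library such as pyarrow) are omitted.

```python
import csv
import io
import json

# Hypothetical delivery schema: field name -> accepted Python type(s).
SCHEMA = {"sku": str, "title": str, "price": (int, float), "scraped_at": str}


def is_valid(record: dict) -> bool:
    """True if every schema field is present with an accepted type."""
    return all(isinstance(record.get(name), kind) for name, kind in SCHEMA.items())


def clean(records: list[dict]) -> list[dict]:
    """Drop invalid records, then keep only the first record seen per SKU."""
    seen: set[str] = set()
    cleaned = []
    for record in records:
        if not is_valid(record) or record["sku"] in seen:
            continue
        seen.add(record["sku"])
        # Project onto the schema so unexpected extra fields are not delivered.
        cleaned.append({name: record[name] for name in SCHEMA})
    return cleaned


def to_csv(records: list[dict]) -> str:
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(SCHEMA))
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json_lines(records: list[dict]) -> str:
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)


raw = [
    {"sku": "A1", "title": "Kettle", "price": 19.99, "scraped_at": "2024-05-01"},
    {"sku": "A1", "title": "Kettle", "price": 19.99, "scraped_at": "2024-05-01"},  # duplicate
    {"sku": "B2", "title": "Toaster", "price": "n/a", "scraped_at": "2024-05-01"},  # wrong type
    {"sku": "C3", "title": "Mug", "price": 4, "scraped_at": "2024-05-01"},
]
rows = clean(raw)
print(to_csv(rows))
print(to_json_lines(rows))
```

Validating before deduplicating means a malformed first copy of a record never blocks a later, valid copy of the same SKU.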